The tool, called Nightshade, messes up training data in ways that could cause serious damage to image-generating AI models. It is intended as a way to fight back against AI companies that use artists’ work to train their models without the creator’s permission.

ARTICLE - Technology Review

ARTICLE - Mashable

ARTICLE - Gizmodo

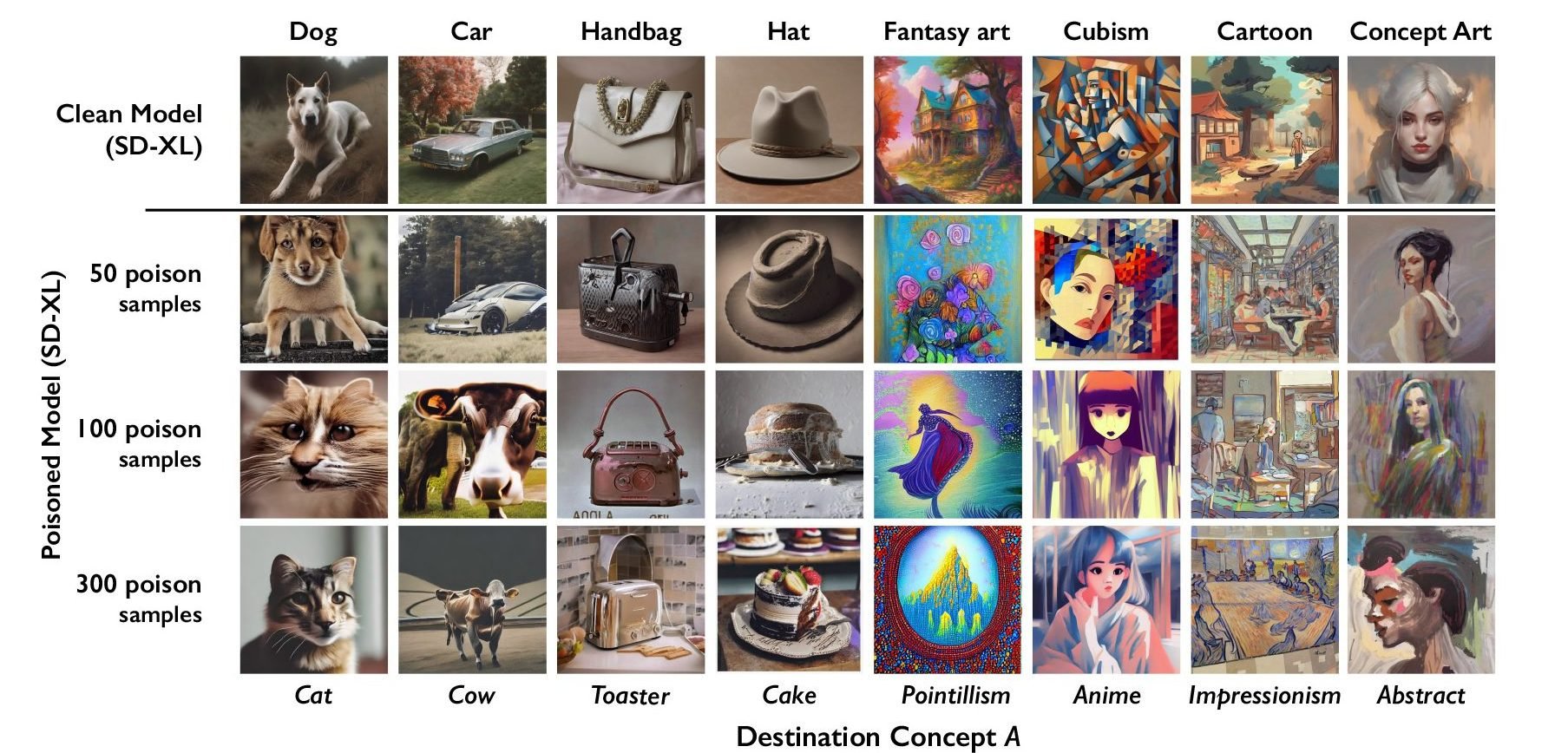

The researchers tested the attack on Stable Diffusion’s latest models and on an AI model they trained themselves from scratch. When they fed Stable Diffusion just 50 poisoned images of dogs and then prompted it to create images of dogs itself, the output started looking weird: creatures with too many limbs and cartoonish faces. With 300 poisoned samples, an attacker can manipulate Stable Diffusion into generating images of dogs that look like cats.

This is one of the dumbest things I’ve ever seen.

Anyone who thinks this is going to work doesn’t understand the concept of signal-to-noise.

Let’s say you are an artist who draws cats. And you are super worried big tech is going to be able to use your images to teach AI what a cat looks like. So instead you use this tool to mangle the pixels so your cats are biased towards looking like lizards.

Over there is another artist who also draws cats and is worried about AI. So they use this tool to bias their cats towards looking like horses.

All that bias data taken across thousands of pictures of cats ends up becoming indistinguishable from noise. There’s no more hidden bias signal.

The only way this would work is if the majority of the images of object A in the training data all had a hidden bias towards the same object B (which were the very artificial conditions used in the paper).

The problem compounds across every axis you might want to bias. If you draw fantasy cats, are you only biasing away from cats towards dogs? Or are you also going to try to bias away from fantasy towards pointillism? You can always bias towards pointillism dogs, but now your poisoning is less effective when it’s combined with a cubist cat artist biasing towards anime dogs.

As you dilute the bias data by trying to cover multiple aspects that AI can learn from your images, you push the signal further into the noise, such that even if there were collective agreement on how to bias each individual axis, it’d be effectively worthless in a large and diverse training set.
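To put rough numbers on the dilution argument, here’s a quick toy simulation. It is not Nightshade and not how a diffusion model actually learns; it only counts how many poisoned images end up pushing towards the same target when every poisoner picks their own target at random. The function name `strongest_shift`, the 100,000-image set, the 5% poison rate, and the 50 subjects / 20 styles are all made-up numbers for illustration, and it assumes the poisoning effect scales with the share of images agreeing on a target.

```python
import random
from collections import Counter


def strongest_shift(n_images, poison_fraction, n_targets, seed=0):
    """Toy model: share of the whole training set pulled towards the single
    most popular poison target, when each poisoner picks a target at random."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    n_poisoned = int(n_images * poison_fraction)
    # Each poisoned image "votes" for one target chosen uniformly at random.
    votes = Counter(rng.randrange(n_targets) for _ in range(n_poisoned))
    return max(votes.values()) / n_images if votes else 0.0


# Hypothetical numbers: 100,000 cat images, 5% of them poisoned.
# Everyone agrees on one target (the coordinated case).
coordinated = strongest_shift(100_000, 0.05, n_targets=1)
# Each poisoner picks one of 50 possible subjects.
subject_only = strongest_shift(100_000, 0.05, n_targets=50)
# Each poisoner picks one of 50 subjects x 20 styles.
subject_and_style = strongest_shift(100_000, 0.05, n_targets=50 * 20)

print(f"coordinated:       {coordinated:.4%}")
print(f"subject only:      {subject_only:.4%}")
print(f"subject and style: {subject_and_style:.4%}")
```

In this sketch the coordinated case keeps the full 5% pushing in one direction, while the uncoordinated cases leave even the most popular target with only a small slice of that, and adding a second axis shrinks it again.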

This is dumb.