Not entirely clear but perhaps OP is talking about blocking unwanted outgoing requests? E.g. anti-features and such since they mention traffic from their apps.

ffhein

- 3 Posts

- 110 Comments

Ah, I didn’t expect the results to be different when looking at the overview, this is what I saw…

Any way to break down that “Other” and see what it contains? If it counts Ubuntu 22.04.4 LTS and Ubuntu 24.04 LTS as different operating systems there might be some more Ubuntu versions hiding in there.

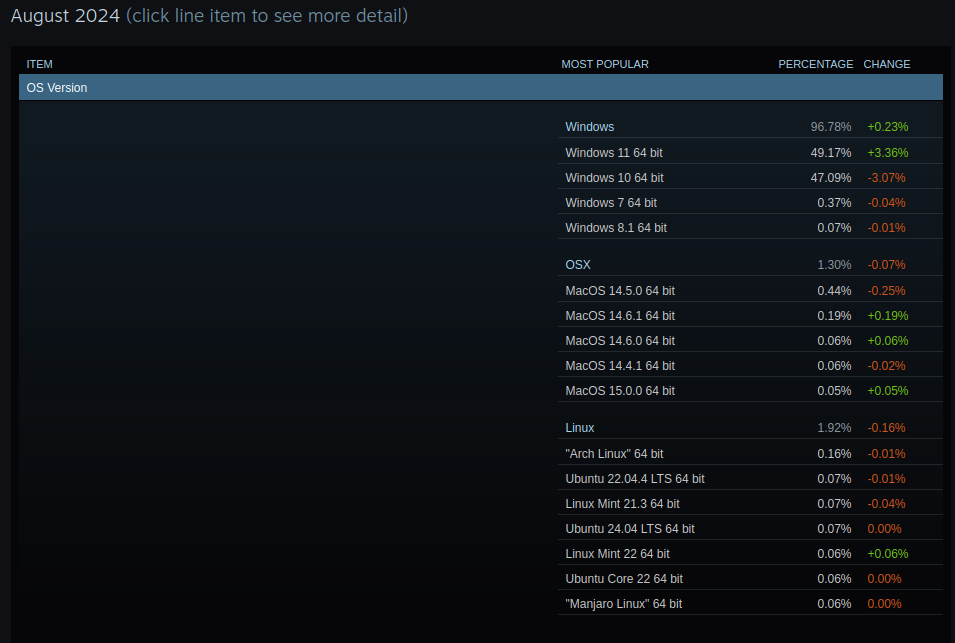

I looked at the August 2024 results and SteamOS was not mentioned anywhere in the OS version section.

Probably because everybody with a Steam Deck shows up as Arch in the survey.

Ahh, now I get it :P

Nope, Norwegian company until they were bought by Chinese investors a few years ago. They did have a lot of developers in Sweden and Poland though.

My last 4 employers have used desktop Linux to some extent:

- Ericsson (Swedish telecoms), default was to have a Windows laptop with X server (Citrix?) but a few of us were lucky enough to get a Linux laptop.

- Vector (German automotive), Linux dev. environment in a VM on Windows laptops.

- Opera Software (Norwegian web browser), first day I was given a stack of components and told to assemble my PC and then install my Linux distribution of choice.

- And a smaller company, which shall remain unnamed, also used Windows laptops with Linux dev. env. in VM.

Sure most of it was on top of Windows, but if you fullscreen it you can barely tell the difference :)

My first couple of computers had AmigaOS and even from the start Windows felt like complete garbage in comparison, but eventually I had to buy a PC to keep up with the times. After that I kept looking for alternative OSes, tried Linux dual booting but kept going back to Windows since all the programs and hardware I needed to use required it. When I finally decided to go full time Linux, some time between 2005 and 2010, it was because I felt like I was just wasting my life in front of the computer every day. With Windows it was too easy to fire up some game when I had nothing else to do, and at that time there were barely any games for Linux so it removed that temptation. But that has ofc. changed now and pretty much all Windows games work equally well on Linux :)

26·2 months ago

The only certification I have is from the Kansas City Barbeque Society, allowing me to act as a judge in BBQ competitions.

Things are probably different nowadays, but at least 15-25 years ago you could just apply for IT jobs and if someone lied about their skills it would hopefully show during the technical interviews. I don’t know if that counts as getting in very early.

Easiest GUI toolkit I’ve used was NiceGUI. The end result is a web app but the Python code you write is extremely simple, and it felt very logical to me.

Assuming they already own a PC, if someone buys two 3090 for it they’ll probably also have to upgrade their PSU so that might be worth including in the budget. But it’s definitely a relatively low cost way to get more VRAM, there are people who run 3 or 4 RTX3090 too.

3·2 months ago

Interesting… I’ve never had this issue in Fedora KDE, which I run on my PC, but exactly the same thing happens on my wife’s PC and the HTPC which both run Xubuntu. Tried setting screen saver, power save options and eventually even uninstalling the screensaver completely. At least in my case it’s caused by Xorg DPMS if I remember correctly. Fixed it a while ago but then it came back on one of the computers at some point. Check out https://wiki.archlinux.org/title/Display_Power_Management_Signaling if it could be the same for you.
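If you want to check whether DPMS is actually active before digging further, something like the sketch below might help. It only parses the text that `xset q` prints; the `SAMPLE` string is an illustrative excerpt and `dpms_enabled` is a hypothetical helper name, not part of any tool.

```python
def dpms_enabled(xset_output: str) -> bool:
    """Return True if the given `xset q` output reports DPMS as enabled."""
    for line in xset_output.splitlines():
        text = line.strip().lower()
        # The relevant line reads "DPMS is Enabled" or "DPMS is Disabled".
        if text.startswith("dpms is"):
            return "enabled" in text
    # No DPMS status line found: treat it as not enabled.
    return False


# Illustrative excerpt of `xset q` output (assumption: your values will differ).
SAMPLE = """DPMS (Energy Star):
  Standby: 600    Suspend: 600    Off: 600
  DPMS is Enabled
  Monitor is On
"""

print(dpms_enabled(SAMPLE))
```

On a real machine you would feed it the output of `xset q` from inside the X session. If it reports enabled, `xset -dpms` turns DPMS off and `xset s off` turns off the X screen saver for the current session, though whether that survives a reboot depends on your setup.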

I think a 650 W PSU should be enough for a workload of 490 W idle. Please, correct me, if I am wrong.

You mean 490W under load, right? One would hope that your computer uses less than 100W idle, otherwise it’s going to get toasty in your room :) I would say this depends on how much cheaper a 650W PSU is, and how likely it is you’ll upgrade your GPU. It really sucks saving up for a ridiculously expensive new GPU and then realizing you also need to fork out an additional €150 to replace your fully functional PSU. On the other hand, going from 650W to 850W might double the cost of the PSU, and it would be a waste of money if you don’t buy a high end GPU in the future. For PSUs, check out https://cultists.network/140/psu-tier-list/. If you’re buying a decent quality unit I wouldn’t worry about efficiency loss from running at a lower % of its rated max W, I doubt it’s going to be enough to be noticeable on your power bill.
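To put rough numbers on the 650W vs 850W question, here is a small sketch. It assumes the 490W figure is the peak draw of the whole system, and the function names are made up for illustration.

```python
def psu_load_fraction(load_watts: float, psu_watts: float) -> float:
    """Fraction of the PSU's rated output used at the given load."""
    if psu_watts <= 0:
        raise ValueError("psu_watts must be positive")
    return load_watts / psu_watts


def headroom_watts(load_watts: float, psu_watts: float) -> float:
    """Rated watts left over for a future upgrade."""
    return psu_watts - load_watts


# Assumption: 490 W is the system's peak draw under load.
for psu in (650, 850):
    frac = psu_load_fraction(490, psu)
    print(f"{psu} W PSU: {frac:.0%} load, {headroom_watts(490, psu)} W spare")
```

The spare watts are the part that matters if a more power-hungry GPU is on the horizon.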

I’ve always had Nvidia GPUs and they’ve worked great for me, though I’ve stayed with X11 and never bothered with Wayland. If you’re conscious about power usage, many cards can be power limited + overclocked to compensate. For example I could limit my old RTX3080 to 200W (it draws up to 350W with stock settings) and with some clock speed adjustments I would only lose about 10% fps in games, which isn’t really noticeable if you’re still hitting 120+ fps. My current RTX3090 can’t go below 300W (stock is 370W) without significant performance loss though.
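As a back-of-the-envelope check of what that power limit buys, the sketch below compares frames per watt before and after. It assumes the card really draws the full limit in both cases and that "about 10%" means 90% of the fps is retained; `relative_efficiency` is a hypothetical helper.

```python
def relative_efficiency(stock_watts: float, limited_watts: float,
                        fps_retained: float) -> float:
    """Frames per watt after limiting, relative to stock (1.0 = unchanged)."""
    if stock_watts <= 0 or limited_watts <= 0:
        raise ValueError("wattages must be positive")
    power_fraction = limited_watts / stock_watts
    return fps_retained / power_fraction


# RTX 3080 numbers from the comment above: 350 W stock, 200 W limit,
# roughly 10% fps lost (assumption: exactly 90% retained).
gain = relative_efficiency(350, 200, 0.90)
print(f"{gain:.2f}x frames per watt")
```

Actual draw varies per game, so treat the result as a rough upper bound rather than a measurement.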

If you have any interest in running AI stuff, especially LLM (text generation / chat), then get as much VRAM as you possibly can. Unfortunately I discovered local LLMs just after buying the 3080, which was great for games, and realized that 12GB VRAM is not that much. CUDA (i.e. Nvidia GPUs) is still dominant in AI, but ROCm (AMD) is getting more support so you might be able to run some things at least.

Another mistake I made when speccing my PC was to buy 2*16GB RAM. It sounded like a lot at the time, but once again when dealing with LLMs there are models which are larger than 32GB that I would like to run with partial offloading (splitting work between GPU and CPU, though usually quite slow). Turns out that DDR5 is quite unstable, and I don’t know if it’s my motherboard or the Ryzen CPU which is to blame, but I can’t just add 2 more sticks: there are 4 slots, but with all of them populated the RAM would run at 3800MHz instead of the 6200MHz that the individual sticks are rated for. Don’t know if Intel mobos can run 4x DDR5 sticks at full speed.

And a piece of general advice, in case this isn’t common knowledge at this point: be wary when trying to find buying advice using search engines. Most of the time it’ll only give you low quality “reviews” which are written only to convince readers to click on their affiliate links :( There are still a few sites which actually test the components and don’t just AI generate articles. Personally I look for tier lists compiled by users (like this one for mobos), and when it comes to reviews I tend to trust those which get very technical with component analyses, measurements and multiple benchmarks.

It’s not that bad. Of course I’ve had a few games that didn’t work, like CoD:MW2, but nearly all multiplayer games my friends play also work on Linux. The last couple of years we’ve been playing Apex Legends, Overwatch, WoWs, Dota 2, Helldivers 2, Diablo 4, BF1, BFV, Hell Let Loose, Payday 3, Darktide, Isonzo, Ready or Not, Hunt: Showdown to name a few.

15·2 months ago

For LLMs it entirely depends on what size models you want to use and how fast you want it to run. Since there are diminishing returns to increasing model sizes, i.e. a 14B model isn’t twice as good as a 7B model, the best bang for the buck will be achieved with the smallest model you think has acceptable quality. And if you think generation speeds of around 1 token/second are acceptable, you’ll probably get more value for money using partial offloading.

If your answer is “I don’t know what models I want to run” then a second-hand RTX3090 is probably your best bet. If you want to run larger models, building a rig with multiple (used) RTX3090 is probably still the cheapest way to do it.
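For a rough feel of which model sizes fit on a 24GB card like the RTX3090, here is a sketch that estimates the memory taken by the weights alone. The 4 bits per weight and the 2 GiB reserved for context and buffers are assumptions picked for illustration, and real usage depends on the quantization format and context length.

```python
def weights_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


def fits_in_vram(params_billion: float, bits_per_weight: float,
                 vram_gib: float, reserve_gib: float = 2.0) -> bool:
    """True if the weights fit after reserving some VRAM for everything else.

    reserve_gib is a guessed allowance for context and buffers (assumption).
    """
    return weights_gib(params_billion, bits_per_weight) + reserve_gib <= vram_gib


for size in (7, 14, 70):
    gib = weights_gib(size, 4)
    verdict = "fits" if fits_in_vram(size, 4, 24) else "needs offloading or more GPUs"
    print(f"{size}B at 4 bits: ~{gib:.1f} GiB of weights, {verdict} on 24 GiB")
```

Anything that does not fit is where partial offloading or a multi-GPU rig comes in.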

21·3 months ago

What kind of issues do they have? I’ve used gtx970, 1080, rtx3080 and now 3090 and I’ve never had any issues worth mentioning.

1·3 months ago

Is max tokens different from context size?

Might be worth keeping in mind that the generated tokens go into the context, so if you set max tokens to 1k with a 4k context you only get 3k left for the character card and chat history. I think I usually have it set to 400 tokens or something, and use TGW’s continue button in case a long response gets cut off.
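The arithmetic is simple enough to sketch. `prompt_budget` is a made-up helper, and it assumes the frontend reserves the full max tokens value out of the context window, as described above.

```python
def prompt_budget(context_size: int, max_new_tokens: int) -> int:
    """Tokens left for character card and chat history after reserving the reply."""
    if max_new_tokens >= context_size:
        raise ValueError("max_new_tokens must be smaller than context_size")
    return context_size - max_new_tokens


# 4k context with 1k reserved for the response leaves 3k for the prompt.
print(prompt_budget(4096, 1024))
# A smaller reservation such as 400 tokens leaves noticeably more history.
print(prompt_budget(4096, 400))
```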

4·3 months ago

llama.cpp uses the gpu if you compile it with gpu support and you tell it to use the gpu…
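The "tell it to use the gpu" part is the `--n-gpu-layers` (`-ngl`) option, which sets how many layers get offloaded. A sketch of putting such a command together is below; the binary name `llama-cli` and the model path are assumptions (the binary has had different names across versions), and the build still has to include GPU support for the flag to do anything.

```python
def llama_cpp_command(model_path: str, prompt: str, gpu_layers: int,
                      binary: str = "llama-cli") -> list[str]:
    """Build an argv list for a llama.cpp run with GPU offloading.

    binary is an assumed name; adjust it to whatever your build produced.
    """
    if gpu_layers < 0:
        raise ValueError("gpu_layers must be zero or positive")
    return [
        binary,
        "-m", model_path,                    # model file to load
        "-p", prompt,                        # prompt text
        "--n-gpu-layers", str(gpu_layers),   # layers to offload to the GPU
    ]


# Hypothetical model path, for illustration only.
cmd = llama_cpp_command("models/example.gguf", "Hello", gpu_layers=35)
print(" ".join(cmd))
```

With `gpu_layers=0` everything stays on the CPU, which is one way to end up with a GPU build that still never touches the GPU.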

Never used koboldcpp, so I don’t know why it would give you shorter responses if both the model and the prompt are the same (also assuming you’ve generated multiple times, and it’s always the same). If you don’t want to use Discord to visit the official koboldcpp server, you might get more answers from a more LLM-focused community such as !localllama@sh.itjust.works

1·3 months ago

Hooray, I can finally play it. Had it on my wish-list for years, when I finally bought it I found out that neither the native Linux nor the Windows+Proton version was working.

Personally I’m not looking for an OS that is “not so bad”, the initial impression should be “this is great” :)

That’s also the thing, I switched to Linux because I hated using Windows, and I don’t like how Microsoft operates. The last thing I want is a distribution which tries to be Windows made by a company which tries to be Microsoft. It’s of course an exaggeration, and Ubuntu doesn’t do EEE and patent trolling as far as I know, but at least for me it feels like they’re going in the wrong direction when they keep reinventing the wheel, forcing solutions that users don’t want, and generally trying to create a “one size fits all” desktop. I’m not against it, Ubuntu is probably a good choice for some users, it just doesn’t fit me. I used Xubuntu for many years, and I also tried both Gnome and Unity at different points, but currently I use Fedora KDE.